CoreWeave (Deep dive)

An extensive deep dive into every part of the investment case.

CoreWeave’s IPO is one of the most closely watched in a while.

Apart from being one of the biggest IPOs in recent times, CoreWeave has been closely tied to Nvidia and the AI trade.

For anyone invested in the AI theme, CoreWeave’s S-1 filing offers further insight into the AI ecosystem and is very useful reading.

Furthermore, going deeper into CoreWeave’s S-1 filing also sheds light on the company’s dynamics and business model.

This is what the deep dive will cover:

Background

A platform specifically built for AI

The CoreWeave advantage

Deep partnership with Nvidia

Business model

Committed long-term take-or-pay contracts

Unit economics

Just-In-Time funding

Customer base

Remaining performance obligations

Customer concentration

Competitive landscape

Competitive landscape – Hyperscale cloud providers

Competitive landscape – Specialized AI Cloud Providers

Management team

Management team – Founders

Management team – Recent hires

Management team – Key long-time employees

Shareholding

Shareholding – Founders

Shareholding – Magnetar Capital

Shareholding – Nvidia

Financials

Capital structure and debt profile

Final thoughts and implications

Background

While CoreWeave is mainly known for its involvement in AI, it is interesting to note that this was not the case at the start.

When it was founded in 2017, the company was actually called The Atlantic Crypto Corporation.

After the 2018 cryptocurrency crash, the company pivoted to providing cloud-based GPU infrastructure, rebranding as CoreWeave in 2019.

In 2020, the CoreWeave cloud platform was launched.

Before 2022, the company had limited revenues, most of which came from its cryptocurrency mining offerings.

The company discontinued its Blockchain Mining and Management Services business on September 30, 2022, and strategically shifted all the resources and computing capacity it had used to mine cryptocurrencies, particularly Ethereum, to the cloud computing business.

The CoreWeave cloud platform is designed to deliver cutting-edge software solutions for accelerated computing, particularly tailored for AI workloads. The platform leverages high-performance GPUs to provide scalable and efficient cloud infrastructure, enabling enterprises and AI labs to run complex computations and machine learning tasks more effectively.

CoreWeave's cloud platform is built on a Kubernetes-native architecture, which allows for seamless management of large-scale GPU clusters. This architecture ensures high availability, reliability, and security for AI applications.

The platform supports a wide range of AI and high-performance computing use cases, making it a versatile solution across industries.

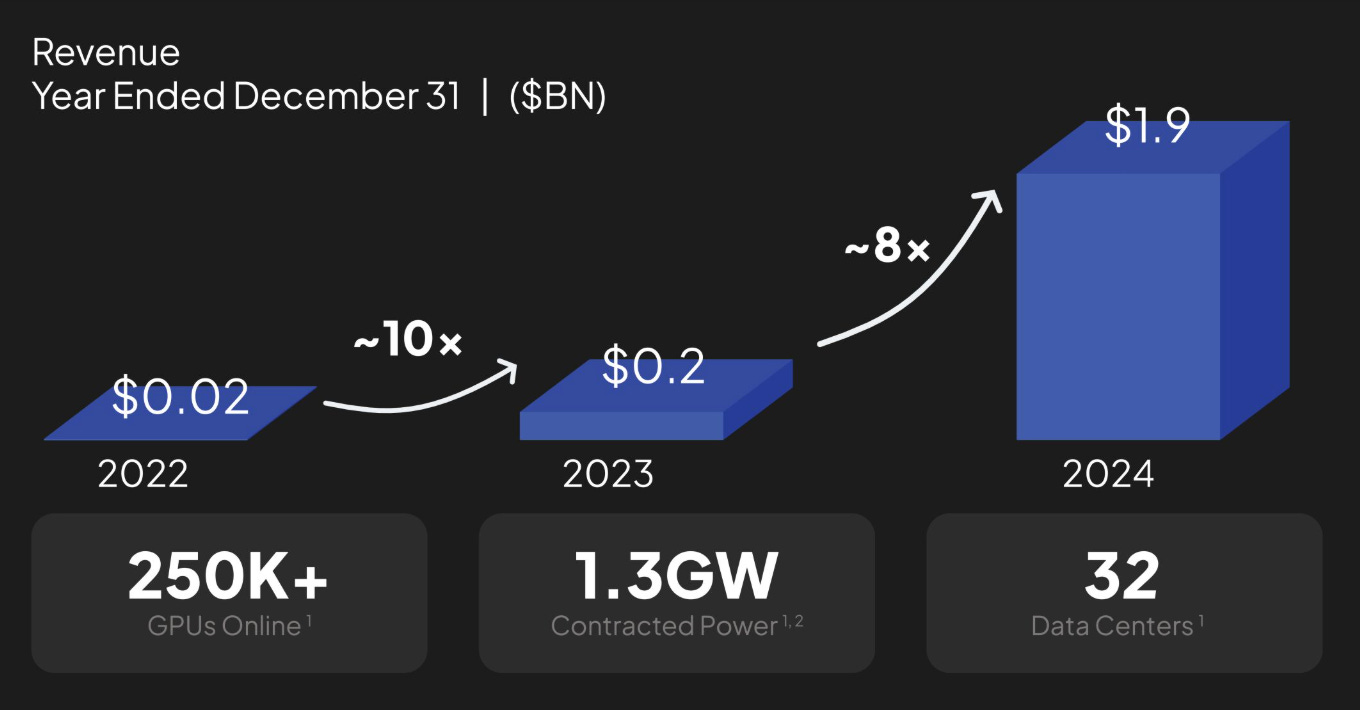

As of December 31, 2024, CoreWeave had 32 data centers running more than 250k GPUs and requiring more than 360 MW of active power.

The pivot from cryptocurrency to AI could not have come at a better time given the exponential growth in demand for accelerated computing and AI workloads.

CoreWeave’s revenues grew 10x, from $20 million in 2022 to $200 million in 2023, and then grew another 8x to $1.9 billion in 2024, highlighting the strong demand for AI workloads from AI labs and enterprises during this period.

I’ll analyze the customer base in greater detail in the “Customer base” section below, but it is worth noting that some of the largest and fastest-growing AI labs and enterprises are CoreWeave customers, including Cohere, IBM, Meta, Microsoft, Mistral, NVIDIA, and OpenAI.

A platform specifically built for AI

The CoreWeave Cloud Platform was specifically built and developed by the company to be the infrastructure and application platform for AI.

Before going into the strengths and advantages of the CoreWeave Cloud Platform, it is useful to understand the requirements of the AI infrastructure buildout.

The generalized clouds operated by the hyperscalers were built for general-purpose use cases such as e-commerce, search and databases.

They were not designed for the much higher demands of AI and are not optimized for running AI workloads.

These generalized clouds run on CPU-based compute, but the AI buildout requires GPU-based compute infrastructure, which has fundamentally different capacity, performance and scalability requirements.

GPU-based infrastructure for AI operates at very large scale: the superclusters needed to train and serve AI models require tens of thousands of GPUs, many thousands of miles of high-speed networking cables and interconnects, and immense amounts of power and storage.

Designing and constructing a supercluster therefore requires strong global supply chain management capabilities.

Because of this complexity, AI infrastructure also requires purpose-built software to unlock performance and efficiency, especially at supercluster scale. This software monitors the AI workloads running on the infrastructure so that failures and downtime are minimized.

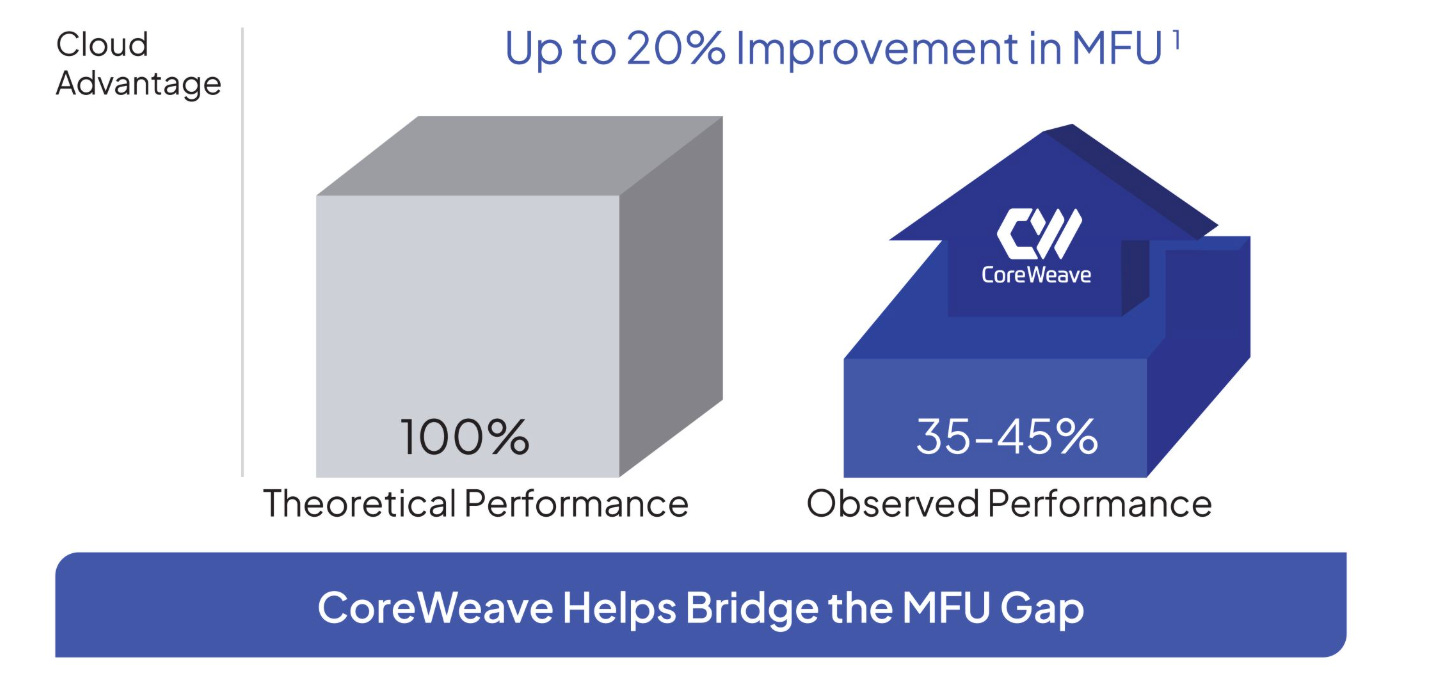

Another difficulty in ramping AI infrastructure is the Model FLOPS utilization (“MFU”) efficiency gap.

Model FLOPS utilization measures the observed throughput as a fraction of the theoretical maximum the system would achieve if it ran at peak FLOPS.

Empirical evidence shows that, because of the complexity of AI infrastructure, a majority of the compute capacity embedded in GPUs is lost to system inefficiencies, with observed MFU typically only about 35% to 45%.
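To make the definition concrete, the Python sketch below estimates MFU for a transformer training run. It relies on the common rule of thumb that training costs roughly 6 FLOPs per model parameter per token (forward plus backward pass). The function name, the 70B-parameter model, the throughput, the GPU count and the per-GPU peak are all hypothetical illustration values, not CoreWeave figures.

```python
def training_mfu(params: float, tokens_per_sec: float,
                 num_gpus: int, peak_flops_per_gpu: float) -> float:
    """Return Model FLOPS Utilization as a fraction of peak.

    Assumes ~6 FLOPs per parameter per training token (forward + backward),
    a common approximation for dense transformer training.
    """
    achieved_flops = 6 * params * tokens_per_sec  # observed model FLOPs per second
    peak_flops = num_gpus * peak_flops_per_gpu    # theoretical system maximum
    return achieved_flops / peak_flops


# Hypothetical run: 70B-parameter model, 1,024 GPUs,
# each assumed to peak at 1e15 FLOPs per second.
mfu = training_mfu(params=70e9, tokens_per_sec=1.0e6,
                   num_gpus=1024, peak_flops_per_gpu=1e15)
print(f"MFU: {mfu:.1%}")  # prints "MFU: 41.0%"
```

With these assumed inputs the run lands at about 41% MFU, inside the 35% to 45% range. For a fixed model and fixed hardware, MFU only rises if throughput (tokens per second) rises, which is exactly what better networking, storage and scheduling software aim to deliver.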

A company like CoreWeave that can narrow this efficiency gap will get better performance and efficiency out of the same AI infrastructure.

The CoreWeave advantage

Because the CoreWeave Cloud Platform is purpose-built for AI, both its software and its hardware are designed from the ground up to be optimized for AI workloads.

The result is a highly efficient AI infrastructure.

The CoreWeave Cloud Platform was found to deliver up to 20% higher system MFU than comparative benchmark MFU performance; the benchmark probably refers to cloud infrastructure run by the hyperscalers.
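The headline figure can be read in more than one way. Assuming the "up to 20%" is a relative improvement (for example, 40% MFU rising to 48%), which is an assumption rather than something stated here, a small Python sketch shows what it would mean for the 35% to 45% range and for the GPU-hours needed to finish a fixed workload. The function names are hypothetical.

```python
def uplifted_mfu(baseline_mfu: float, relative_gain: float) -> float:
    """MFU after a relative improvement (e.g. 0.20 for +20%)."""
    return baseline_mfu * (1 + relative_gain)


def gpu_hours_saved(relative_gain: float) -> float:
    """Fraction of GPU-hours saved on a fixed amount of model FLOPs.

    Time to finish a fixed workload scales with 1 / MFU, so a relative
    MFU gain g cuts GPU-hours by 1 - 1 / (1 + g).
    """
    return 1 - 1 / (1 + relative_gain)


for baseline in (0.35, 0.45):  # the typical observed MFU range
    print(f"{baseline:.0%} -> {uplifted_mfu(baseline, 0.20):.0%}")
print(f"GPU-hours saved: {gpu_hours_saved(0.20):.1%}")
```

Under this reading, the typical range would move to roughly 42% to 54%, and a fixed training job would need about 16.7% fewer GPU-hours. The saving is smaller than the headline gain because cost scales with the inverse of MFU.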

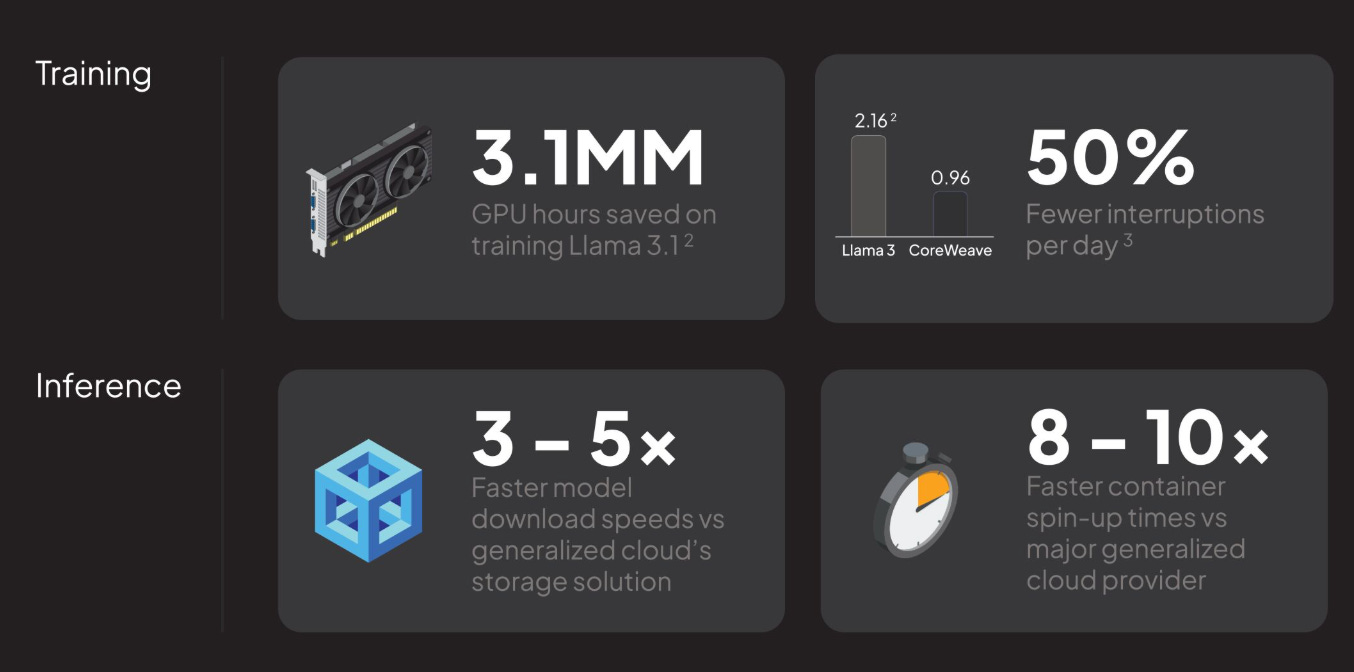

With this highly efficient AI infrastructure, CoreWeave has been able to scale very rapidly while maintaining high performance, meeting the requirements of both training and inference for AI models.

The CoreWeave Cloud Platform has also broken performance records, including setting an MLPerf record in 2023 that was 29 times faster than competitors.

Across use cases from training to inference, customers using the CoreWeave Cloud Platform can significantly improve performance, efficiency, cost and user experience.

CoreWeave cites faster access to the latest AI technologies and infrastructure as one of its key advantages, which includes access to the newest GPUs from Nvidia.

To be fair, this claim holds up: CoreWeave was the first cloud provider to deliver NVIDIA H200 Tensor Core GPUs to market, was among the first to deliver large-scale NVIDIA H100 Tensor Core GPU clusters and GH200 clusters, and was the first cloud provider to make NVIDIA GB200 NVL72-based instances generally available.

CoreWeave also claims that it can make AI compute capacity available to customers in as little as 2 weeks after receiving systems from OEM partners such as Dell and Super Micro.

Deep partnership with Nvidia

To be first to offer Nvidia GPUs to customers, CoreWeave needs to be a key Nvidia partner.

Firstly, Nvidia is a major shareholder of CoreWeave, owning 17.9 million shares, which makes up 5% of the company after the IPO.

Secondly, Nvidia signed a Master Services Agreement with CoreWeave in April 2023, under which CoreWeave provides Nvidia with infrastructure and platform services as Nvidia requires them. As of the end of 2024, Nvidia had paid CoreWeave $320 million under this agreement.

Thirdly, CoreWeave and Nvidia have a partnership to deploy Nvidia’s GPUs. Here CoreWeave adds value for Nvidia by helping diversify Nvidia’s customer base and getting its GPUs to customers as quickly as possible.

Lastly, the S-1 filing shows that all of CoreWeave’s more than 250k GPUs today are Nvidia GPUs, reflecting its obligations under current customer contracts.

Sourcing 100% of its GPUs from Nvidia is a risk: any delays affecting Nvidia would directly affect CoreWeave’s business.

There is another risk: current customers have contractually specified the use of Nvidia GPUs, and the company states that if it were required to use GPUs from outside of Nvidia, its platform and solutions may not be as performant, which could result in higher costs and lower margins.

Business model

I think it is important to first understand how CoreWeave works as a business and earns revenues.

This is fundamental knowledge an investor needs before diving deeper into the various aspects of the business.

Committed long-term take-or-pay contracts

Firstly, a significant majority of revenues are derived from committed contracts that are multi-year in nature.

For the year ended December 31, 2024, committed contracts accounted for 96% of CoreWeave’s revenues.

Under these committed long-term contracts, customers typically reserve capacity for 2 to 5 years on a take-or-pay basis.

As of December 31, 2024, CoreWeave’s committed contracts had a weighted-average contract duration of 4 years.
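The weighting basis is not spelled out here, so the Python sketch below assumes durations are weighted by committed contract value. The `Contract` type, the customer names and the amounts are hypothetical. It shows how a few large, long-dated contracts pull the average up.

```python
from dataclasses import dataclass


@dataclass
class Contract:
    name: str
    value_usd: float       # total committed contract value (hypothetical)
    duration_years: float  # contract term


def weighted_avg_duration(contracts: list[Contract]) -> float:
    """Average contract duration weighted by committed contract value."""
    total_value = sum(c.value_usd for c in contracts)
    return sum(c.value_usd * c.duration_years for c in contracts) / total_value


# Hypothetical book of committed contracts (illustrative only).
book = [
    Contract("customer_a", 6e9, 5.0),
    Contract("customer_b", 2e9, 2.0),
    Contract("customer_c", 2e9, 3.0),
]
print(f"{weighted_avg_duration(book):.1f} years")  # prints "4.0 years"
```

A simple unweighted average of the same three contracts would be about 3.3 years, so the weighting choice matters when comparing duration figures across companies.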

Take-or-pay means the customer is obligated either to take delivery of the goods or services or to pay a penalty for not doing so.

In terms of pricing, committed contracts generally carry a fixed price for the contract duration, measured in dollars per contracted GPU per hour, and are billed monthly based on the customer’s reserved usage commitments.
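The pricing mechanics can be sketched in Python. The $2.00 per GPU-hour price, the 10,000-GPU reservation, the 730-hour billing month and all function names are hypothetical assumptions for illustration. The point is that the invoice depends only on the reservation, so low utilization raises the customer's effective cost per used GPU-hour rather than reducing CoreWeave's billings.

```python
HOURS_PER_MONTH = 730  # assumption: average hours per month (8,760 / 12)


def take_or_pay_invoice(reserved_gpus: int, price_per_gpu_hour: float,
                        hours: float = HOURS_PER_MONTH) -> float:
    """Monthly invoice: reserved GPUs x hours x fixed $/GPU-hour.

    Actual usage is deliberately not an input: the customer is billed
    on its reserved capacity whether or not it uses it.
    """
    return reserved_gpus * hours * price_per_gpu_hour


def contract_value(reserved_gpus: int, price_per_gpu_hour: float,
                   years: int) -> float:
    """Total billings over the term at a price fixed for the whole contract."""
    return take_or_pay_invoice(reserved_gpus, price_per_gpu_hour) * years * 12


def effective_price_per_used_hour(price_per_gpu_hour: float,
                                  utilization: float) -> float:
    """What the customer effectively pays per GPU-hour it actually uses."""
    return price_per_gpu_hour / utilization


# Hypothetical reservation: 10,000 GPUs at $2.00 per GPU-hour for 4 years.
print(f"Monthly invoice: ${take_or_pay_invoice(10_000, 2.00):,.0f}")
print(f"Contract value: ${contract_value(10_000, 2.00, years=4):,.0f}")
print(f"Cost per used GPU-hour at 50% usage: "
      f"${effective_price_per_used_hour(2.00, 0.5):.2f}")
```

With these inputs the monthly invoice is $14,600,000 whether the customer uses half or all of its capacity. At 50% usage, its effective cost doubles to $4.00 per used GPU-hour, which is the utilization risk take-or-pay shifts onto the customer.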

There are 3 stages between a customer signing a committed contract and CoreWeave recognizing revenues.

Firstly, when a contract is signed, a customer usually makes a prepayment to CoreWeave.

Secondly, after the committed contract is signed, CoreWeave places purchase orders for the infrastructure and its installation.

The sequence here is important because it significantly derisks CoreWeave’s business model: by signing a committed contract with a customer first, CoreWeave ensures it has concrete demand before investing in and paying for the AI infrastructure.

Thirdly, installation usually takes 3 months; once installation is complete, the contract duration begins and revenues are recognized.
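The three stages of a committed contract can be laid out as a simple month-by-month sketch in Python. The 3-month installation comes from the text. The $14.6 million monthly amount, the 48-month term and the function names are hypothetical, and recognizing the same amount every month is a simplifying assumption. The sketch also reflects the standard accounting point that a prepayment is cash received in advance, not revenue.

```python
from dataclasses import dataclass


@dataclass
class Stage:
    month: int  # months after contract signing
    event: str


def contract_stages(install_months: int = 3) -> list[Stage]:
    """The three stages of a committed contract, in order (timing approximate)."""
    return [
        Stage(0, "contract signed; customer prepayment received"),
        Stage(0, "purchase orders placed for infrastructure and installation"),
        Stage(install_months, "installation complete; contract term and revenue begin"),
    ]


def monthly_revenue(monthly_invoice: float, term_months: int,
                    install_months: int = 3) -> list[float]:
    """Revenue recognized in each month after signing (index 0 = month 1).

    Nothing is recognized during installation; the prepayment is held
    as deferred revenue until the service is actually delivered.
    """
    return [0.0] * install_months + [monthly_invoice] * term_months


# Hypothetical 4-year contract billed at $14.6 million per month.
for stage in contract_stages():
    print(f"month {stage.month}: {stage.event}")
schedule = monthly_revenue(14.6e6, term_months=48)
print(f"first revenue month: {schedule.index(14.6e6) + 1}")  # prints 4
print(f"total revenue: ${sum(schedule):,.0f}")
```

The ordering is what derisks the model: capital is committed only after a signed contract exists, and the revenue stream starts about a quarter later, once the capacity is live.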